Many people in Utility Vegetation Management (UVM) believe that sampling is less accurate than a full inventory count when it comes to understanding a system. That view is far too simplistic, and as a general statement it is wrong.

It would be more accurate to say this: if what you want to know is the total count and proportional composition of the current workload, then an inventory will be more or less accurate, provided nothing changes on the system while the data is being collected.

However, the cost of an inventory for a UVM system is about 20 times the cost of a sample, and the inventory will be at most 5% more accurate than the sample. That makes little sense from a financial standpoint, and it makes little sense from a management standpoint either.
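The trade-off between sample size and accuracy can be illustrated with a textbook calculation. The sketch below is not the method used for any particular UVM survey; it assumes a simple random sample, a worst-case proportion of 0.5, and a purely hypothetical system of 100,000 spans. It uses the standard margin-of-error formula for a proportion with a finite population correction to show how little precision is gained as the sample grows toward a full count.

```python
import math

def margin_of_error(p, n, population, z=1.96):
    """Approximate 95% margin of error for a proportion estimated from a
    simple random sample of size n drawn from a finite population."""
    if n >= population:
        return 0.0  # a full count has no sampling error (other errors remain)
    standard_error = math.sqrt(p * (1 - p) / n)
    # Finite population correction: sampling error shrinks as n nears the total.
    fpc = math.sqrt((population - n) / (population - 1))
    return z * standard_error * fpc

POPULATION = 100_000  # hypothetical number of spans on the system
P = 0.5               # worst-case proportion (largest possible margin)

for fraction in (0.01, 0.05, 0.25, 1.0):
    n = int(POPULATION * fraction)
    moe = margin_of_error(P, n, POPULATION)
    print(f"sample {fraction:>4.0%} (n={n:>7,}): margin of error = {moe:.2%}")
```

Under these assumptions a 5% sample already estimates a category's share to within about two percentage points, and each further increase in sample size buys a smaller improvement than the last. Real field surveys are usually stratified rather than simple random samples, so actual figures will differ.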

Understanding every detail of the current workload is not the same as understanding the system that the workload is a part of. Understanding the dynamics of the system actually requires a counter-intuitive approach: throwing granularity of data away.

This is because too much data can be as bad as, or worse than, too little data when it comes to inferring the forces that shape the system. In essence, the mistake in the inventory approach, as compared to the sampling approach, is the assumption that more data tells us more about the system. That assumption is false because the system is in constant flux.

No UVM manager would agree that the composition of a UVM workload will stay as it stands now, yet a workload inventory starts with exactly that assumption as its premise. In reality, the current state of the system is just one of many states it could be in, so understanding the system ‘exactly’ is pointless from a management perspective unless the cycle the system is on is incredibly short.

Another issue is the redundancy of the data. How will all that extra data help in predicting the future state of the system, or the interventions needed to create a desired state, when the only difference in accuracy is 5%? That level of change to the system could happen in one storm alone, never mind the effects of disease, owner intervention or natural in-growth over time.

Then there are the problems associated with the models built from that data. Any modeler worth their money would actually sample the inventory to gain accuracy in the predictive power of the model. The reason is something called “over-fitting”.

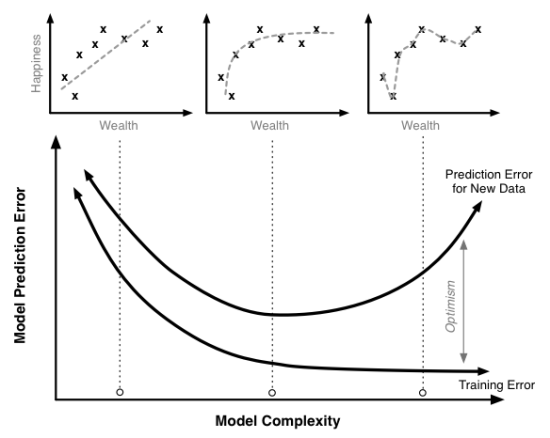

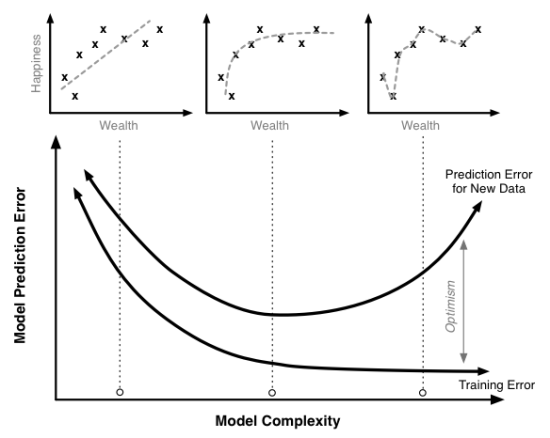

Modeling is about creating sense from patterns. In the graphs above (author: Scott Fortman-Roe), the relationship being plotted is how wealth increases the perception of happiness. We can see intuitively that the more wealth we have, the less each further increase affects us: the impact is non-linear and decreasing.

The top left graph shows a linear relationship, which fits the data poorly (a low R-sq value). The level of prediction error is high.

The middle graph shows a much better non-linear fit and the level of prediction error, as expected, is much lower.

However, the top right graph shows an incredibly high level of fit to the data, yet its level of prediction error is again very high. This is an example of over-fitting the model to the data. Essentially, the model assumes that the state of the system is static rather than variable over time. Its fit is too representative of the current state, as though the current state were free from error variation and representative of all future states of the system.
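The effect described above can be reproduced with a few lines of code. The following is a minimal sketch on synthetic data, not a reconstruction of the graphs: it assumes a logarithmic "diminishing returns" curve with random noise, fits polynomials of degree 1, 2 and 9 to ten training points using NumPy's `Polynomial.fit`, and then scores each fit against fresh data drawn from the same curve. The curve, noise level and degrees are all illustrative choices.

```python
import numpy as np
from numpy.polynomial import Polynomial

rng = np.random.default_rng(0)

def true_curve(x):
    # Assumed underlying relationship: returns diminish as x grows.
    return np.log(x)

# Ten noisy observations to fit on (the "current snapshot").
x_train = np.linspace(1.0, 10.0, 10)
y_train = true_curve(x_train) + rng.normal(0.0, 0.3, x_train.size)

# Fresh observations from the same system (a "future state").
x_test = np.linspace(1.0, 10.0, 200)
y_test = true_curve(x_test) + rng.normal(0.0, 0.3, x_test.size)

def mse(model, x, y):
    """Mean squared error of a fitted model on the given data."""
    return float(np.mean((model(x) - y) ** 2))

errors = {}
for degree in (1, 2, 9):
    # Degree 9 passes through all ten training points, noise included.
    model = Polynomial.fit(x_train, y_train, degree)
    errors[degree] = (mse(model, x_train, y_train), mse(model, x_test, y_test))
    print(f"degree {degree}: fit error = {errors[degree][0]:.4f}, "
          f"prediction error = {errors[degree][1]:.4f}")
```

The degree 9 polynomial reproduces the training points almost exactly, so its fit error is close to zero, but it has fitted the noise as well as the pattern. Its error on the fresh data is far larger than its error on the snapshot it was fitted to, which is the signature of over-fitting.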

This is empirically untrue. UVM systems are full of error variation; they are constantly in flux and are constantly being affected by environmental, societal and systematic human interference. In order to understand them, we need to understand what shapes them. In light of this objective, it is actually counterproductive to understand the current state through its exact proportional categorical quantities. What we need is an understanding of the inherent variation of those categories, so that we can understand possible future states, rather than an in-depth view of the current ‘snapshot’ in time.

It follows that, from a management point of view, more data is simply pointless because it exaggerates the importance of a snapshot in time when flux is the natural state of the system. It would be necessary to ignore the ‘accuracy’ because the accuracy is actually a lie. There is no such thing as an accurate representation of the state of a system, only a current state of a system: one of many states it could transform to, and inevitably will transform to, over time. As such, the level of description from an inventory is fleeting and is pointless information for management purposes.

Taking an inventory is only useful when doing work planning for contractor crews; it is not useful for understanding the system or its dynamics, or for setting management objectives. For those purposes it is actually a less accurate depiction of what a UVM manager needs to know, namely the intervention strategies that would shape the future state of the system. For that, we can gain more information for a fraction of the price, which logically means that 90+% of the cost of an inventory is a waste of money.

~Richard Jackson

The Arborcision/ACRT booth at the Expo